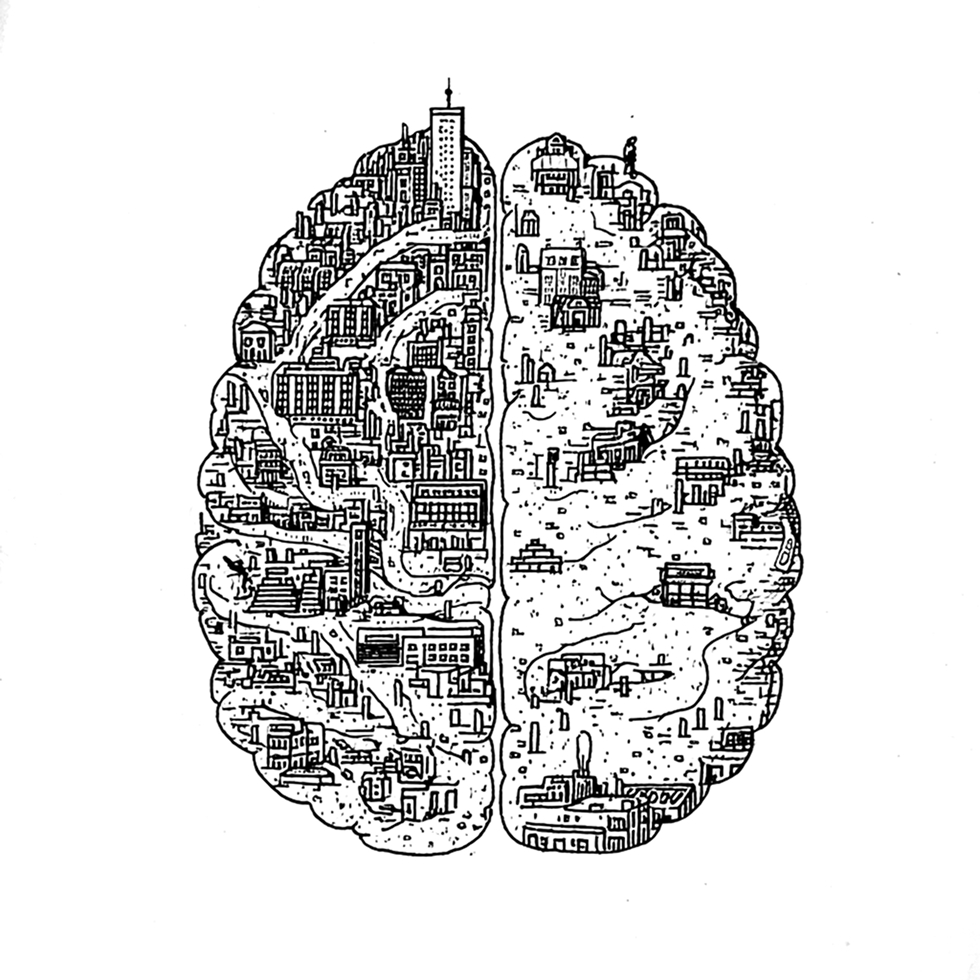

Hippocampus

AI works for individuals.

It breaks at organizational scale.

Most enterprise AI efforts stall in the same place: confident but unverifiable answers, quality that collapses with volume, and knowledge locked into a single vendor's stack. Without an organizational memory you actually control, AI stays a promising demo.

Individual

Every person's AI is its own island. Knowledge does not transfer across people or services.

Limited

No model can hold an entire organization's knowledge in context at once.

Doesn't scale

More data means steeply rising costs, and answers that get worse, not better.

No human in the loop

AI answers are hard to trust, and it is harder still to know when to verify them.

An external memory layer for organizations, built and maintained by AI.

Documents and connections go in. The AI reads them, summarizes them, and links them into a wiki your team can browse, edit, and trust. Any modern model works: Claude, GPT, Gemini, or open weights.

Instead of brute-forcing raw files on every query, Hippocampus understands your documents once, then reuses that understanding every time. Answers come back faster, cheaper, more accurate, and traceable to the source.

Ingest. Organize. Access.

Ingest

Any document goes in: contracts, reports, transcripts, code, emails, policies, and more.

Organize

AI reads them once and builds a layered memory: source → summary → index. Tunes itself over time.

Access

Queried by any AI or human. Every answer links back to its source, fully traceable.
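
As a rough illustration of the three steps above, here is a minimal Python sketch of a layered memory. It is not Hippocampus's implementation: the `Entry` structure, the `ingest` and `query` functions, and the first-sentence `summarize` stand-in (where a real system would call a model) are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Entry:
    """One document held at three depths (hypothetical structure)."""
    source_id: str                                    # where the text came from
    text: str                                         # source layer: the original document
    summary: str                                      # summary layer: written once, at ingest
    keywords: set[str] = field(default_factory=set)   # index layer: cheap lookup terms


def summarize(text: str) -> str:
    # Stand-in for a model call (assumption): keep the first sentence
    # so the sketch runs offline.
    return text.split(".")[0].strip() + "."


def ingest(memory: dict[str, Entry], source_id: str, text: str) -> None:
    """Read a document once and store all three layers."""
    summary = summarize(text)
    keywords = {w.strip(".,").lower() for w in summary.split() if len(w) > 3}
    memory[source_id] = Entry(source_id, text, summary, keywords)


def query(memory: dict[str, Entry], question: str) -> list[tuple[str, str]]:
    """Match on the index layer, answer from the summary layer,
    and return the source id with every hit so it stays traceable."""
    terms = {w.strip("?.,").lower() for w in question.split()}
    hits = [e for e in memory.values() if e.keywords & terms]
    return [(e.summary, e.source_id) for e in hits]


memory: dict[str, Entry] = {}
ingest(memory, "contract-001.pdf",
       "The supplier contract renews every January. Other clauses follow.")
ingest(memory, "policy-7.md",
       "Remote work requires manager approval. Details below.")

for summary, source_id in query(memory, "When does the contract renew?"):
    print(f"{summary} [source: {source_id}]")
```

The point of the sketch is the cost shape: the expensive read happens once in `ingest`, while each `query` touches only the index and summary layers and still carries a pointer back to the original file.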

"Room for an incredible new product instead of a hacky collection of scripts."

OpenAI cofounder Andrej Karpathy described the same pattern: an AI building a wiki, another AI reading and updating it, humans rarely editing by hand. Hippocampus brings that idea to enterprise scale.

Three things, on every query.

Scalable information

Knowledge is organized in layers: source, summary, index. The model reads the right depth without exploding costs.

Factual, traceable answers

Every response links back to its source. Auditable, not a black box.

Built-in quality control

AI agents propose and peer-review; humans validate the high-stakes calls. This 95/5 split between AI and human work is what makes the system reliable in production.
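
One way to picture that review flow is a small routing function. The sketch below is hypothetical: the `Proposal` fields, the `high_stakes` flag, and the three routes are assumptions chosen for illustration, not a description of how Hippocampus decides.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    REJECT = "reject"


@dataclass
class Proposal:
    page: str
    change: str
    high_stakes: bool     # hypothetical flag, e.g. set for legal or financial pages
    peer_approved: bool   # verdict from a second, reviewing agent


def route(proposal: Proposal) -> Route:
    """Decide what happens to a change proposed by an AI agent."""
    if not proposal.peer_approved:
        return Route.REJECT          # the reviewing agent did not accept it
    if proposal.high_stakes:
        return Route.HUMAN_REVIEW    # a person validates the high-stakes calls
    return Route.AUTO_APPROVE        # routine edits go through without a human


routine = Proposal("onboarding-faq", "fix typo", high_stakes=False, peer_approved=True)
critical = Proposal("liability-clause", "update cap", high_stakes=True, peer_approved=True)
print(route(routine).value, route(critical).value)
```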

Your keys. Your data. Sealed even from us.

Hippocampus runs on your infrastructure: on premises, in your cloud, or fully offline. Any modern LLM plugs in, cloud or self-hosted. Switch models tomorrow without rebuilding your knowledge layer.

Access is controlled at every level: each user and each AI agent sees only what it should. The guiding principle: you can't leak what you don't know.
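
The "can't leak what you don't know" principle can be sketched as filtering before retrieval, so restricted pages never reach a model's context at all. The `Page` and `Principal` types and the group-based rule below are assumptions for illustration only; the actual permission model may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Page:
    title: str
    body: str
    allowed_groups: frozenset[str]   # hypothetical: groups permitted to read this page


@dataclass(frozen=True)
class Principal:
    """A human user or an AI agent; both are checked the same way."""
    name: str
    groups: frozenset[str]


def visible_pages(principal: Principal, pages: list[Page]) -> list[Page]:
    """Return only the pages this principal may read.

    Filtering happens before any text is handed to a model, so content
    outside the principal's groups is never part of the prompt.
    """
    return [p for p in pages if p.allowed_groups & principal.groups]


pages = [
    Page("Salary bands", "...", frozenset({"hr"})),
    Page("Release checklist", "...", frozenset({"engineering", "hr"})),
]
support_agent = Principal("support-bot", frozenset({"engineering"}))

print([p.title for p in visible_pages(support_agent, pages)])
```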

See grounded, traceable answers on a corpus you actually care about.

Bring a representative slice: a department's documents, a regulatory archive, a set of contracts. Add a handful of questions you wish AI could answer reliably. We'll show you what fit looks like within a few conversations.