The problems we think about most.

We work on fundamental AI architecture problems: efficiency, interpretability, and reliability. We validate our research through real products. Below you can explore our current research area, Rosetta Embeddings (interpretable embeddings). More topics will be added as our work evolves.

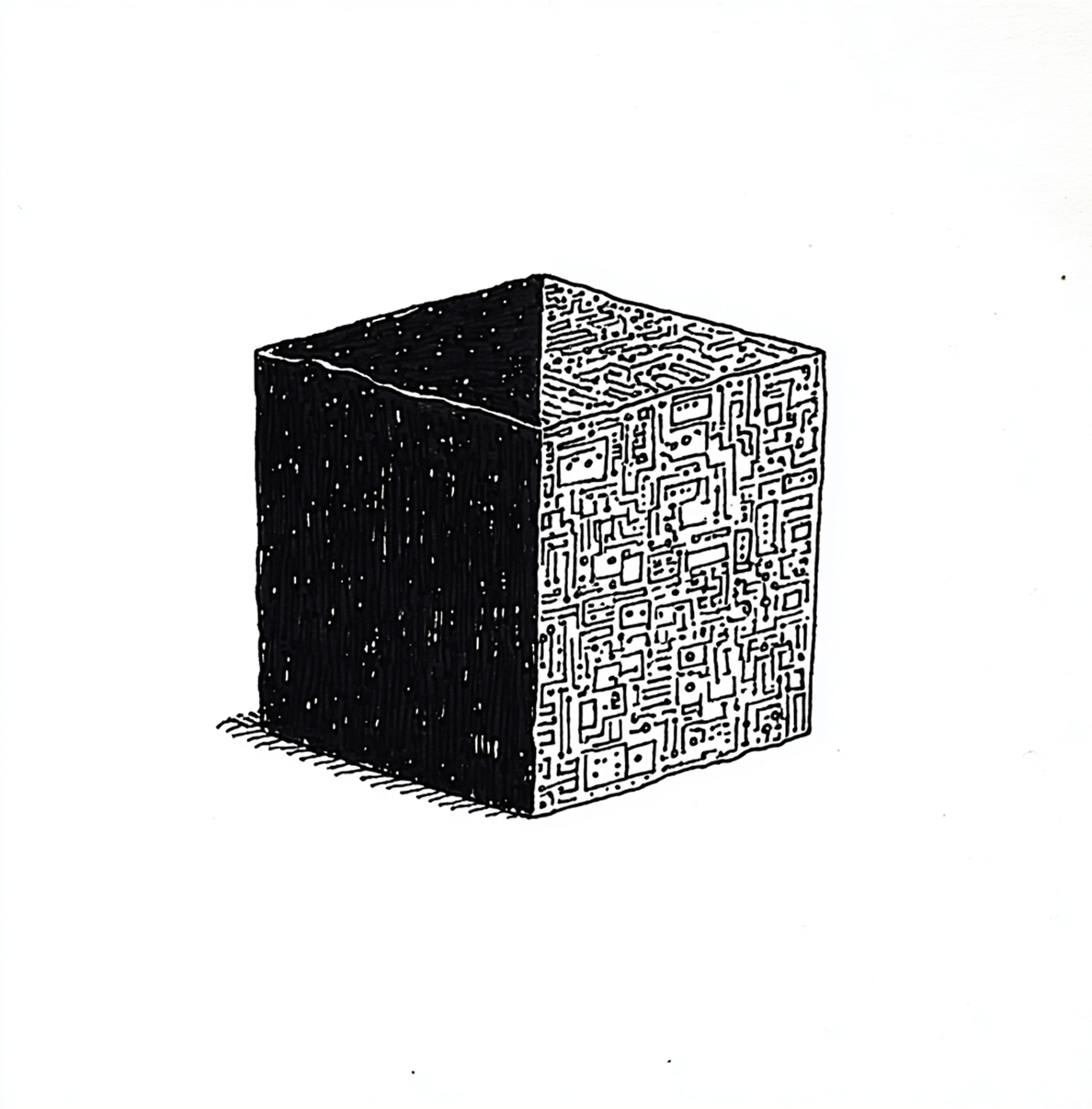

AI's architecture has real constraints.

The transformer model that powers modern AI is remarkable, but it comes with fundamental limitations. The attention mechanism at its core scales quadratically: every token must attend to every other token, so compute costs grow with the square of the input length. Doubling the input roughly quadruples the cost of attention.
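To make the quadratic scaling concrete, here is a minimal sketch. It assumes plain dense self-attention and counts only the pairwise attention scores for a single head in a single layer. The function name is ours, chosen for illustration, and is not part of any library.

```python
def attention_score_count(num_tokens: int) -> int:
    """Pairwise attention scores for one head in one layer.

    Assumes dense self-attention, where each token attends to every
    token in the sequence (including itself).
    """
    return num_tokens * num_tokens


# Each doubling of the input length multiplies the score count by four.
for n in (1_000, 2_000, 4_000):
    print(f"{n} tokens: {attention_score_count(n)} scores")
```

Real models multiply this count by the number of heads and layers, and sparse or linear attention variants change the picture, so treat the numbers as an illustration of the growth rate rather than a cost estimate.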

This isn't just a theoretical concern. It's why AI infrastructure requires enormous energy, why costs spiral as applications get more sophisticated, and why we're hitting practical limits on what we can build.

We're working on solutions that address these constraints at the architectural level, not by adding layers on top, but by rethinking what's underneath.

Rosetta Embeddings: Making AI interpretable.

The problem we're addressing

Standard embeddings are opaque: you put text in, you get numbers out, and you hope those numbers capture something meaningful. This lack of transparency makes it difficult to understand, debug, or customize how AI systems represent concepts.

Our approach

Rosetta makes the embedding process transparent: you can see how concepts are being represented, adjust what's not working, and audit the results. This matters for trust, for customization, and increasingly for compliance. It's also 500–1000× more efficient than running inference through a full LLM.

Where we are

We're actively developing Rosetta and exploring how interpretable embeddings can improve both research and production AI systems. Early results show significant improvements in both efficiency and transparency.